Specifications from Demonstrations

Prediction, Learning, and Teaching

Marcell Vazquez-Chanlatte

PhD Candidate at UC Berkeley

Committee: Sanjit Seshia, Shankar Sastry, Anca Dragan, Steven Piantadosi

2022-05-09

2022-05-09

What goals & assumptions are implicit in the way we act?

Three types of motivating applications.

Share road with many actors

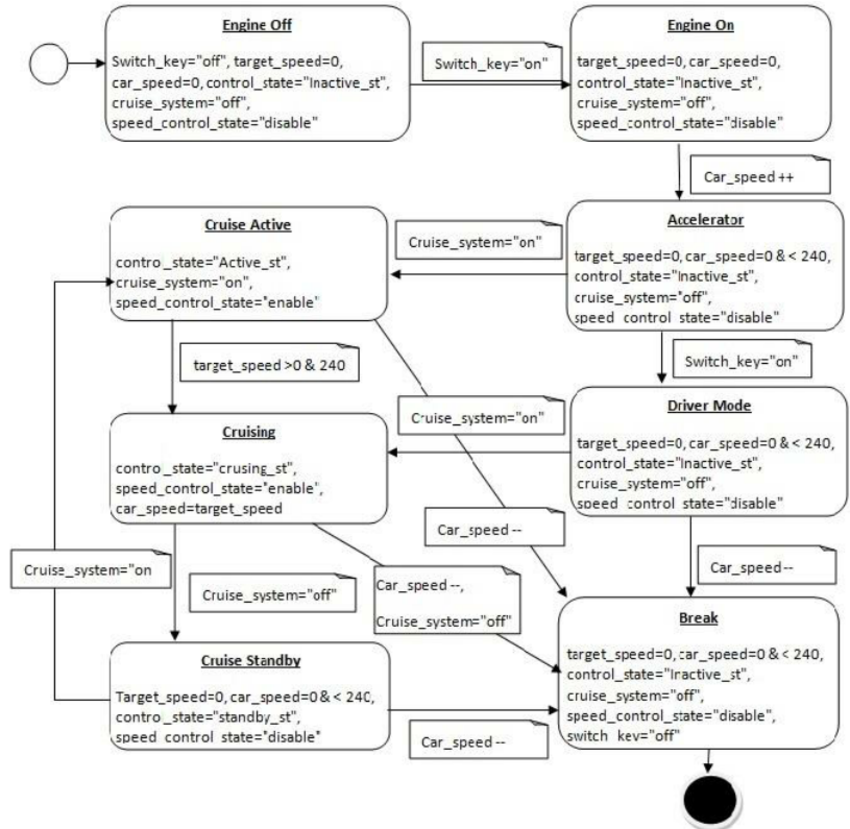

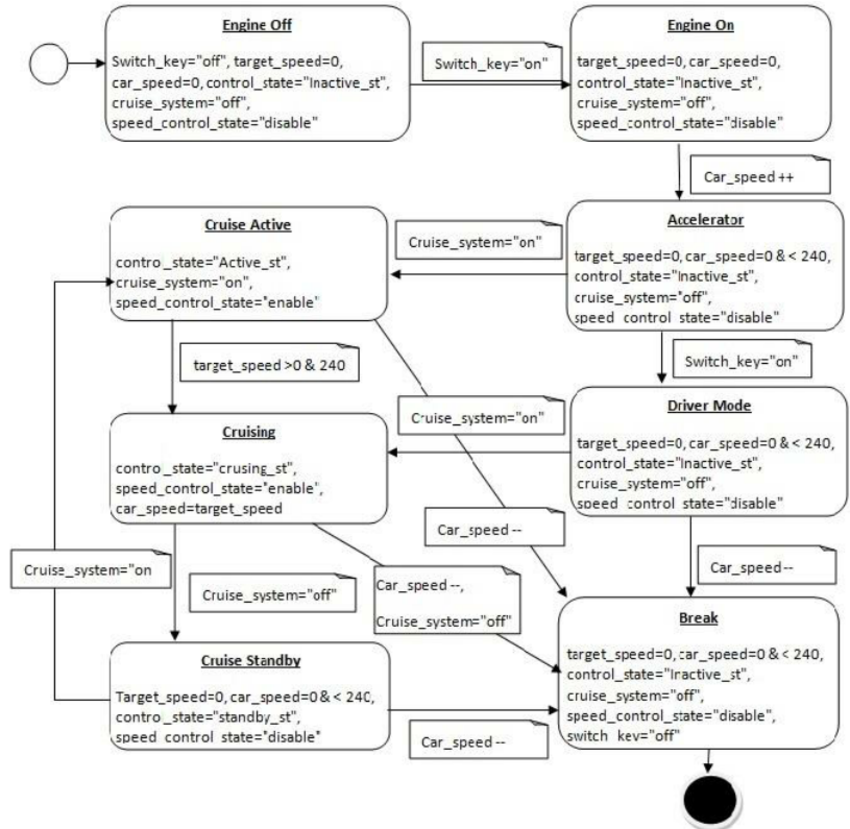

Detecting Mode Confusion + Unused Features

Nobody reads the manual...

Specializing on the fly

Research Stack

Teaching tasks using demonstrations

Learning tasks from demonstrations

Maximum Entropy Planning

Research Stack

Teaching tasks using demonstrations

Learning tasks from demonstrations

Maximum Entropy Planning

Research Stack

Teaching tasks using demonstrations

Learning tasks from demonstrations

Maximum Entropy Planning

How to infer a task?

Many different signals to use for inference

Decades of research in learning rewards from demonstrations

Decades of research in learning rewards from demonstrations

Inverse Reinforcement Learning

Given a series of demonstrations, what reward, $r(s)$, best explains the behavior? (Abbeel and Ng 2004)

Consider an agent acting in the following stochastic grid world.

Can try to move up, down, left, right

May slip due to wind

What is the agent trying to do?

Probably trying to reach yellow tiles

Although these actions are surprising under that hypothesis

And isn't it easier to go to this yellow tile any way?

A lot of information from a single incomplete demonstration

Communication through actions

-

Essential for interpreting other signals: (Language, Disengagments, Other agents)

Goodman, et al. "Pragmatic language interpretation as probabilistic inference." TiCS `16

McPherson, et al. "Modeling supervisor safe sets for improving collaboration in human-robot teams." IROS `18

Afolabi, et al. "People as sensors: Imputing maps from human actions." IROS `18.

Communication through actions

-

Essential for interpreting other signals: (Language, Disengagments, Other agents)

Goodman, et al. "Pragmatic language interpretation as probabilistic inference." TiCS `16

McPherson, et al. "Modeling supervisor safe sets for improving collaboration in human-robot teams." IROS `18

Afolabi, et al. "People as sensors: Imputing maps from human actions." IROS `18. -

Actions are incredibly diagnostic.

Dragan, et al, "Legibility and predictability of robot motion." HRI `13.

Ho, et al, "Showing versus doing: Teaching by demonstration". NIPS `16

Sadigh, et al. "Planning for autonomous cars that leverage effects on human actions." RSS `16.

How should we represent learned tasks?

Desired Properties

- Decouple task from dynamics.

- Prefer sparse rewards.

- Support describing temporal tasks.

Abel, et al. "On the Expressivity of Markov Reward.", NeurIPS `21.

- Support composition.

Markovian Rewards couple environment with task

Reach yellow. Avoid red.

Taking away top left yellow causes error

Reach yellow. Avoid red.

Solution: Sparse reward + memory

Reach yellow. Avoid red.

Solution: Sparse reward + memory

Reach yellow. Avoid red.

Key question: What memory?

Solution: Sparse reward + memory

Reach yellow. Avoid red.

Key question: What memory?

But first: How to represent task?

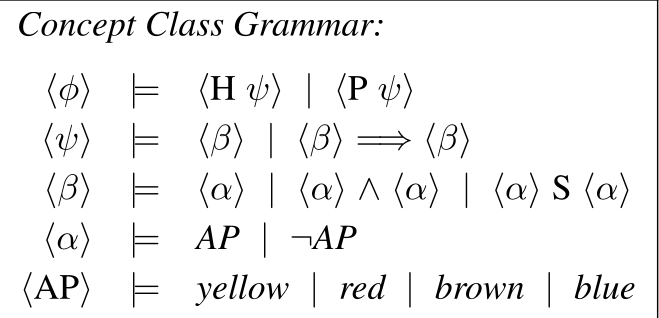

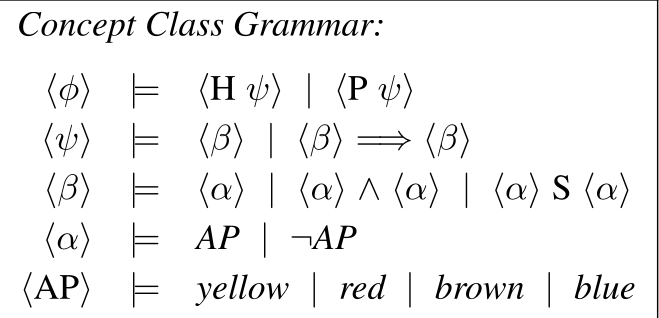

Proposal: Tasks as Boolean Specifications

-

A (Boolean) specification,

$\varphi$, is a set of traces.

- We say $\xi$ satisfies $\varphi$, if $\xi \in \varphi$.

- $[\xi \in \varphi]$ is a sparse objective.

Task Specifications derived from Formal Logic, Automata, etc

Will call a collection of task specifications a concept class.

Support incremental learning

Incrementally Learn smaller/simpler rules

Explictly support learning memory

Specifications have our desired properties

Desired Properties

- Decouple task from dynamics.

- Prefer sparse rewards.

- Support describing temporal tasks.

Abel, et al. "On the Expressivity of Markov Reward.", NeurIPS `21.

- Support composition.

Research Stack

Teaching tasks using demonstrations

Learning tasks from demonstrations

Maximum Entropy Planning

Dynamics and Tasks

recipe

frame as inverse reinforcement learning

place holder

frame as inverse reinforcement learning

frame as inverse reinforcement learning

given a series of demonstrations, what reward, $r(s)$, best explains the behavior? (Abbeel and Ng 2004)

No unique explanatory reward!

Maximize Causal Entropy

subject to feature statistics.

(Ziebart, et al. 2010)Focus on reward matching

Maximize Causal Entropy

subject to $\mathbb{E}[r]$

- Intuition: Don't over commit to any particular strategy.

\[\Pr(\pi) \propto e^{\lambda\cdot \mathbb{E}[r(\xi)~\mid~ \pi]}\]

- Formally: Minimize worst-case excess bits to encode episode.

What should the reward be?

Satisfaction is a sparse objective.

Proposal: Use indicator.

\[\mathbb{E}[r] = \Pr(\xi \in \varphi)\]

Assigns a Demonstration Likelihood

Proxy policy exponentially favors high value prefixes

Will revisit.

Reward Learning offers many ways to rank specifications

- Inverse Optimal Control

- Daniel Kasenberg, Matthias Scheutz. "Interpretable apprenticeship learning with temporal logic specifications." CDC `17

- Glen Chou, Necmiye Ozay, Dmitry Berenson. "Explaining Multi-stage Tasks by Learning Temporal Logic Formulas from Suboptimal Demonstrations." RSS `22

- Bayesian Inference

- Ankit Shah, Pritish Kamath, Julie A. Shah, Shen Li. "Bayesian Inference of Temporal Task Specifications from Demonstrations." NeurIPS `18

- Hansol Yoon, Sriram Sankaranarayanan. "Predictive Runtime Monitoring for Mobile Robots using Logic-Based Bayesian Intent Inference." ICRA `21

- Maximum Entropy Inverse Reinforcement Learning

- Marcell Vazquez-Chanlatte, Susmit Jha, Ashish Tiwari, Mark K. Ho, and Sanjit A. Seshia. "Learning Task Specifications from Demonstrations." NeurIPS. `18.

- Marcell Vazquez-Chanlatte, and Sanjit A. Seshia. "Maximum Causal Entropy Specification Inference from Demonstrations." CAV `20

Key question: What memory?

Problem: No gradient to guide search over discrete structure.

Literature focused on Naïve syntactic search

Literature focused on Naïve syntactic search

Problems with syntatic search

- Conflates inductive bias with search efficiency.

- When teaching even harder to justify.

- Want generic strategy that works with less structured concept classes.

- Ignores the demonstrations!

Solution: Sparse reward + memory

Reach yellow. Avoid red.

Key question: What memory?

Enough memory to seperate good vs bad

Results in walk through labeled example space

Guide search by minimizing surprise

Guide search by minimizing surprise

Will see that $h$ factors through $R^n$

Will try to pull back changes prescribed by gradient.

Key idea 1: Reframe choices along demonstration

Many ways to deviate from demonstration

How valuable is each deviation?

Maximum Causal Entropy Policy

Find $\lambda$ to match $\Pr(\xi \in \varphi)$.

$\ln\sum_x e^x$ ↦ smax.

How valuable is each deviation?

Each deviation's value summarized by $V_\lambda, Q_\lambda$.

Reframe as conforming or deviating

$\mathbb{E}$ and $\text{smax}$ are associative and commutative

Two choices along a demonstration

$\mathbb{E}$ and $\text{smax}$ are associative and commutative

Two choices along a demonstration

Two choices along a demonstration

- Independent of number of actions and state

- Only need transition probabilities in demonstrations.

Key idea 2: View demonstration as a computation tree

Environment nodes average

Agent nodes smoothmax

Demonstrations map to computation graph

Surprise determined by pivot values.

Surprise factors through a function over pivot values.

Gradient of proxy surprise easy to compute.

Key idea 3: Toggle trace labels to manipulate pivot values.

Each pivot's subtree summarized by V

Each path given binary label by specification

Flipping value monotonically changes subtree value

Can sample from $\pi$ to generate high weight paths.

Flipping value monotonically changes subtree value

Flipping value monotonically changes subtree value

Surprise Guided Sampler

- Fix a candidate spec and compute proxy gradient.

- Define the pivot distribution, $D$ = softmax $\left (\frac{|\nabla \widehat{h}|}{\beta}\right)$.

- Sample a pivot, $k \sim D$ and a path, $\xi$, using the $\pi_\varphi$ such that:

- $\xi$ pivots at $k$.

- $\nabla_k \widehat{h} > 0 \iff \xi \in \varphi$.

- New label given by $\nabla_k \widehat{h} < 0$.

Candidate sampler can be any exact learner

Lifted in simulated annealing using example buffer

Lifted in simulated annealing using example buffer

Results in walk through labeled example space

Two Experiments

-

Incremental learning using 1 incomplete unlabeled demo

-

Monolithic learning using 2 complete unlabeled demos.

DISS able to quickly find probable task specs

Most probable DFA almost matches ground truth.

-

Incremental learning using 1 incomplete unlabeled demo

-

Monolithic learning using 2 complete unlabeled demos.

Research Stack

Teaching tasks using demonstrations

Learning tasks from demonstrations

Maximum Entropy Planning

Dynamics and Tasks

Can generate pedagogic demonstrations

Helps Teach Conditional Rules

Recipe to generate pedagogic paths

Tasks induce a description length on demonstrations

Find likely paths given ground truth that have:

- Short description length given learned task distribution.

- Long description length given ground truth task.

Research Stack

Teaching tasks using demonstrations

Learning tasks from demonstrations

Maximum Entropy Planning

Summary

Random Bit Model

Idea: Model Markov Decision Process as deterministic transition system with access to $n_c$ coin flips.

Note: Principle of maximum causal entropy + finite horizon together are robust to small dynamics mismatches.

Random Bit Model

Next: Assume \(\#(\text{Actions}) = 2^{n_a} \)

Random Bit Model

Next: Assume \(\#(\text{Actions}) = 2^{n_a} \)

Random Bit Model

Unrolling \(\tau\) steps and composing with specification results in a predicate.

Random Bit Model

Proposal: Represent \(\psi\) as a Binary Decision Diagram with bits in causal order.

Random Bit Model

Proposal: Represent \(\psi\) as a Binary Decision Diagram with bits in causal order.

Maximum Causal Entropy and BDDs

Q: Can Maximum Entropy Causal Policy be computed on causally ordered BDDs?

Maximum Causal Entropy and BDDs

Q: Can Maximum Entropy Causal Policy be computed on causally ordered BDDs?

A: Yes! Due to:

- Associativity of \(\text{smax}\) and \(\mathbb{E}\).

- \(\text{smax}(\alpha, \alpha) = \alpha + \ln(2)\)

- \(\text{E}(\alpha, \alpha) = \alpha\)

Maximum Causal Entropy and BDDs

- Associativity of \(\text{smax}\) and \(\mathbb{E}\).

- \(\text{smax}(\alpha, \alpha) = \alpha + \ln(2)\)

- \(\text{E}(\alpha, \alpha) = \alpha\)

Maximum Causal Entropy and BDDs

- Associativity of \(\text{smax}\) and \(\mathbb{E}\).

- \(\text{smax}(\alpha, \alpha) = \alpha + \ln(2)\)

- \(\text{E}(\alpha, \alpha) = \alpha\)

Size Bounds

Q: How big can these Causal BDDs be?

Size Bounds

Linear in horizon!

Note: Using function composition, can build BDD in polynomial time.

Summary

Talk was biased towards Learning Contributions

-

Learn task specifications from (un)labeled and (in)complete demonstrations in a MDP.

- Support incremental and monolithic learning.

- Only needs blackbox access to a MaxEnt planner and Concept Identifer.

Contributions

- Learn task specifications from (un)labeled and (in)complete demonstrations in a MDP.

- Support incremental and monolithic learning.

- Only needs blackbox access to a MaxEnt planner and Concept Identifer.

- Can automatically generate pedagogic demonstrations.

- Efficient MaxEnt planning in symbolic Stochastic Games.

- Marcell Vazquez-Chanlatte, Susmit Jha, Ashish Tiwari, Mark K. Ho, and Sanjit A. Seshia. "Learning Task Specifications from Demonstrations." NeurIPS. `18.

- Marcell Vazquez-Chanlatte, and Sanjit A. Seshia. "Maximum Causal Entropy Specification Inference from Demonstrations." CAV `20

- Marcell Vazquez-Chanlatte, Ameesh Shah, Gil Lederman, and Sanjit A. Seshia. "Demonstration Informed Specification Search" In submission.

- Exact Probablistic Model Checking of Finite Markov Chains

- Sebastian Junges, Steven Holtzen, Marcell Vazquez-Chanlatte, Todd Millstein, Guy Van den Broerk, Sanjit A. Seshia. "Model Checking Finite-Horizon Markov Chains with Probabilistic Inference", CAV `21

By no means solved

Clear path to scaling up

- Need estimate of policy on prefix tree.

- Need a way to sample likely paths from policy.

Plays nicely with approximate methods

- Monte Carlo Tree Search (e.g., Smooth Cruiser [1])

- Function Approximation (e.g., Soft Actor Critic [2])

Promising preliminary results using Graph Neural Networks

Works with any supervised learner

Can in principle do Decision Trees and Symbolic Automata.

Works with any supervised learner

Could use natural language or other signals for priors.

Working on variant for constraints

Fix the reward and infer constraints on behavior.

Multi-agent (inferred) assume guarantee reasoning underexplored

\[ A \implies G\]

Maximum causal entropy correlated equillibria

seem like an interesting model

Ziebart, et al., "Maximum causal entropy correlated equilibria for Markov games." AAAI `10.

Thank you

Thank you

Thank you

Slides: mjvc.me/phd